We have two types of tables in Teradata, SET and Multiset table and the main difference of these table is SET table doesn’t allow duplicate row whereas Multiset table allows it, as below:

A set table does not allow any duplicate row in it.For example-

Insert into set_table values (1,2,3);

Insert into set_table values (2,3,4);

Insert into set_table values (1,2,3); –Not allowed

A Multiset table allows duplicate rows in it. For example-

Insert into multiset_table values (1,2,3);

Insert into multiset_table values (2,3,4);

Insert into multiset_table values (1,2,3); –Allowed

There is performance impact involved with SET table. A SET table does not allow duplicate row hence for every new row inserted or updated in the table, Teradata checks on violation of uniqueness constraint for each new row (inserted or updated)this is called duplicate row checking which may overhead on the resources and may cause serious performance issue if number of records are in large number.

To avoid the above performance issue, it is advised that to define UPI, USI or any other uniqueness constraint on any column in SET table.With uniqueness constraint defined on column level, duplicate check won’t happen for entire row rather it will happen on uniqueness constraint hence performance will improve.

Note:- DUPLICATE ROW CHECKS grows exponentially with the number of records per Primary Index(PI) values.

If we insert data using “Insert into table1 select * from table2”, then SET table will filter out duplicate records automatically and there will be no duplicate row error.

In case we insert data using “insert into table2 values” clause, it will check duplicate records and trough error if any duplicate records is there.

Here is an example which I have encountered with SET table-

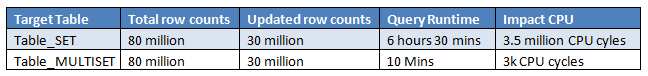

A SET target table was defined with NUPI(Non-Unique primary index) and 80 million records and the query was trying to update 30 million rows in it. As it is defined as SET table, for each of 30 million rows Teradata was performing DUPLICATE ROW CHECK against the target table, just imagine what would be the impact on the system in such scenario.

If you change the table structure as MULTISET and incorporate group by clause in the source table, performance impact can be avoided as Teradata will bypass the duplicate row checks.

Below is the performance result for the update statement-

From the above example we get to know, how wrong choice of table structure can impact the system.

It is highly recommended to define target table as Multiset table if we are using any GROUP BY or QUALIFY clause on the source table.